Projects

From EEG diffusion models to reinforcement learning environments and training-free model merging, our work spans neuroscience, machine learning, and agentic AI.

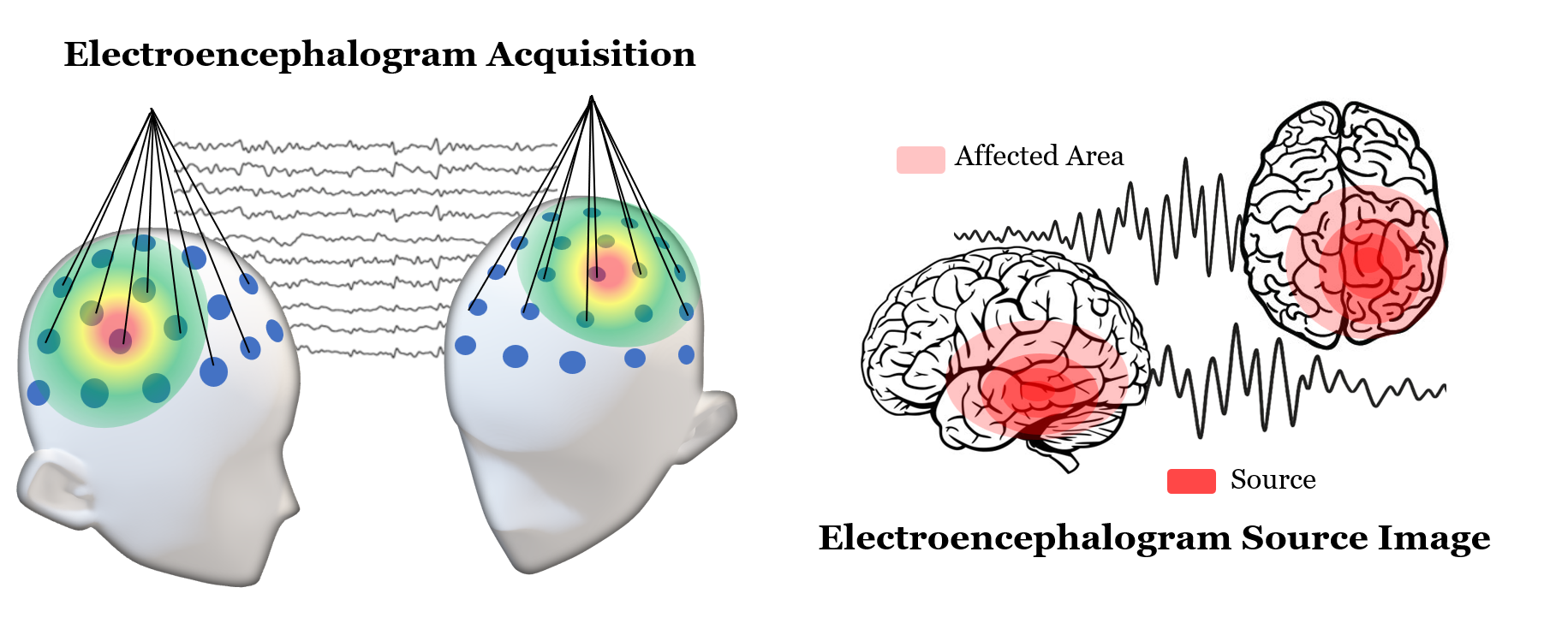

EEGDM — EEG Representation Learning via Generative Diffusion Model

Status: Active. A self-supervised diffusion model for learning rich EEG signal representations. It features a specialised state-space model (SSM) architecture and a latent fusion transformer, and is applied to downstream tasks such as seizure detection.
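The description above gives EEGDM only at a high level. As a hedged illustration of the generic mechanism that diffusion models build on, the sketch below implements the standard DDPM forward (noising) process on a toy signal. The linear β schedule, the 1,000 steps, the function names and the synthetic 10 Hz "EEG" segment are all illustrative assumptions, not EEGDM's actual configuration. The SSM denoiser and the latent fusion transformer are not shown.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T spaced linearly (a common DDPM default)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = prod_{s <= t} (1 - beta_s): the fraction of signal variance kept at step t."""
    alpha_bars, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return alpha_bars


def q_sample(x0, t, alpha_bars, rng):
    """Draw x_t ~ N(sqrt(alpha_bar_t) * x0, (1 - alpha_bar_t) * I) in closed form.

    Also returns the Gaussian noise, which a denoising network is typically
    trained to predict from (x_t, t)."""
    a_bar = alpha_bars[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * e for x, e in zip(x0, noise)]
    return x_t, noise


# Hypothetical single-channel EEG segment: one second of a 10 Hz (alpha-band) sine at 256 Hz.
fs = 256
segment = [math.sin(2 * math.pi * 10 * n / fs) for n in range(fs)]

betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
rng = random.Random(0)
x_early, noise_early = q_sample(segment, 10, alpha_bars, rng)   # still mostly signal
x_late, noise_late = q_sample(segment, 999, alpha_bars, rng)    # almost pure noise
```

A model trained to undo this corruption step by step has to capture the structure of the signal, which is why its intermediate features can serve as learned representations for downstream tasks.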

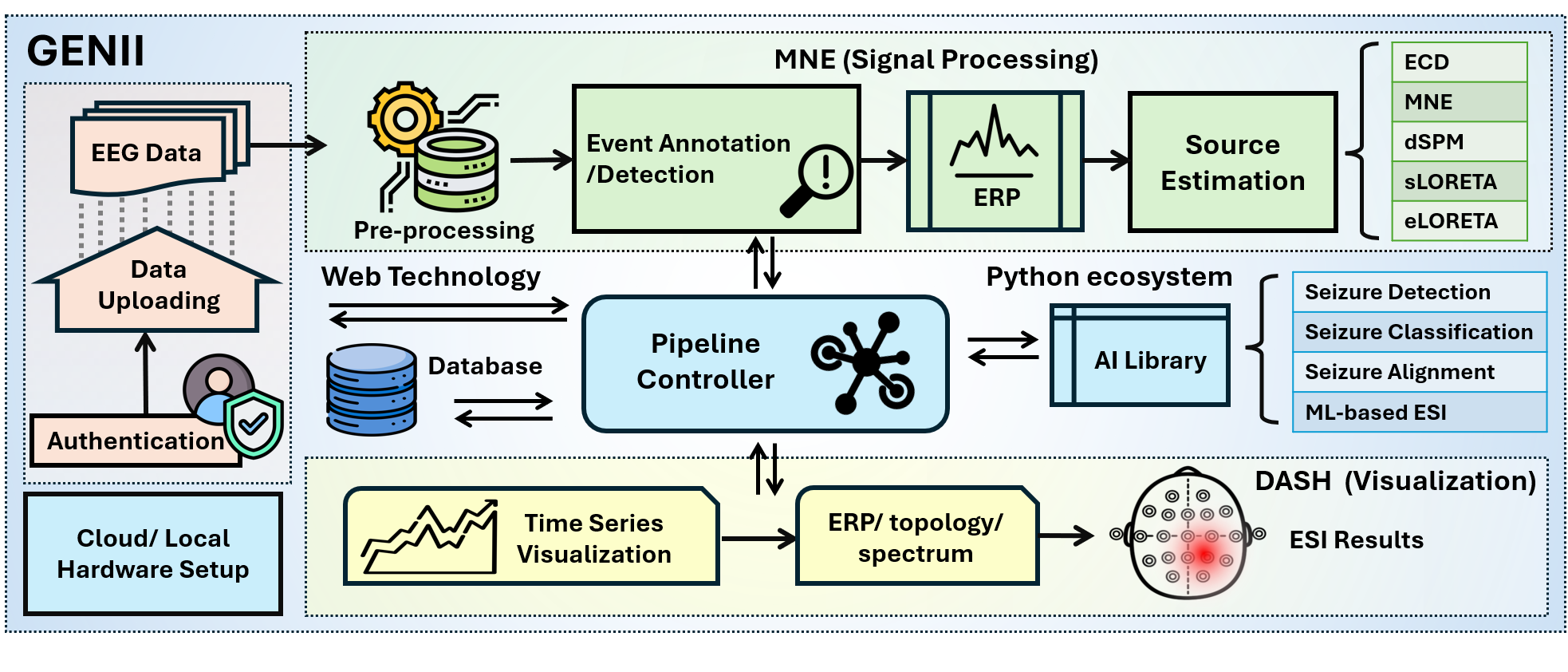

GENII — EEG & Brain Source Imaging on Cloud with AI

Status: Active. An open-source platform that democratises EEG and brain source imaging for epilepsy diagnosis and neurological research. It integrates AI and cloud computing to make advanced neuroimaging accessible in clinical practice.

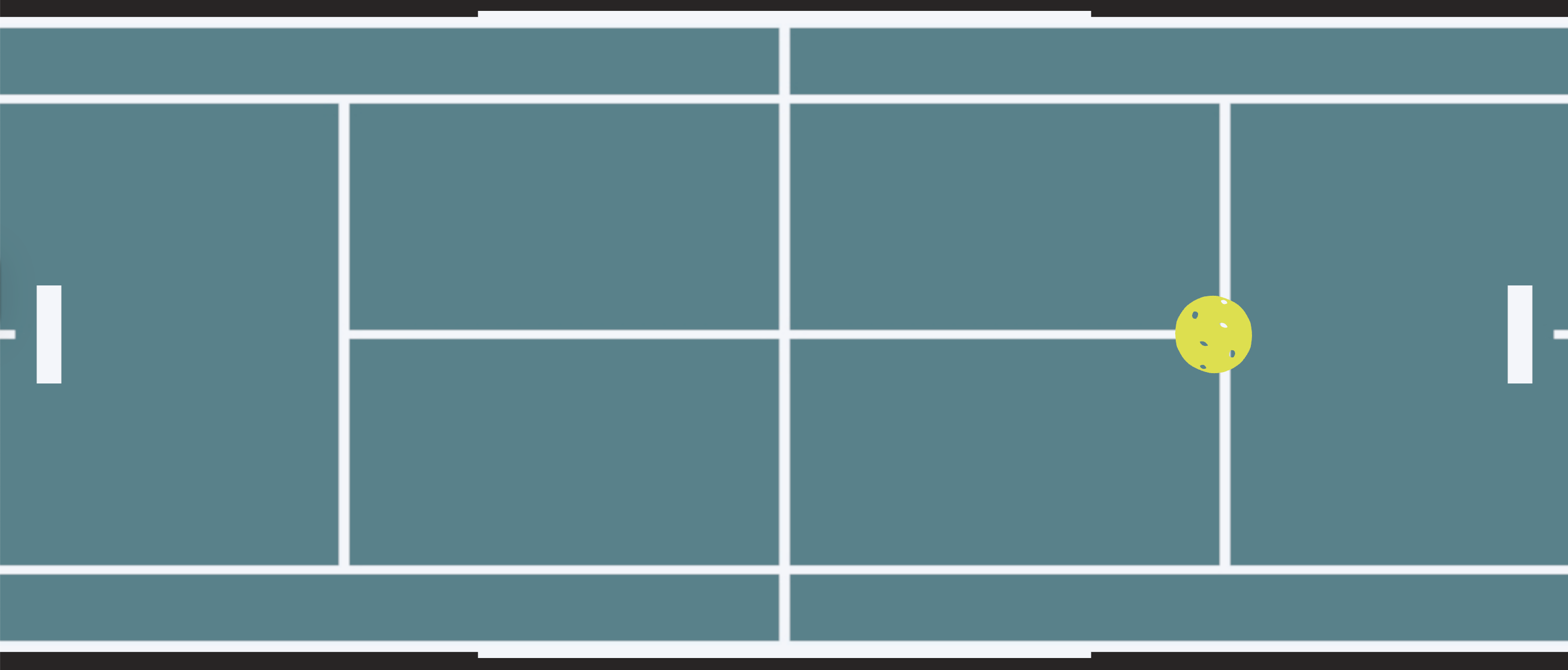

dPickleBall — Reinforcement Learning Environment for Pickleball AI

Status: Active. A physics-based reinforcement learning environment that simulates competitive pickleball gameplay, in which AI agents learn to play and compete autonomously. It serves as the challenge domain for a national AI, HCI and BCI competition.
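To make the agent-environment loop described for dPickleBall concrete, here is a hedged, self-contained sketch of the reset/step interface that reinforcement learning environments commonly expose, in the style popularised by OpenAI Gym. Everything in it is hypothetical: `ToyRallyEnv`, its simplified 2-D ball physics, the ±1 landing reward and the hand-written `chase_policy` are illustrative assumptions, not dPickleBall's actual API, physics engine or reward design.

```python
import random
from dataclasses import dataclass


@dataclass
class StepResult:
    observation: tuple  # (ball_x, ball_y, ball_vx, ball_vy, paddle_x)
    reward: float
    done: bool


class ToyRallyEnv:
    """Hypothetical toy environment; not dPickleBall's actual API or physics.

    A ball follows 2-D projectile motion and the agent slides a paddle along
    the ground, earning +1 if the ball lands within reach and -1 otherwise."""

    GRAVITY = -9.81      # m/s^2
    DT = 0.02            # integration step (s)
    PADDLE_SPEED = 3.0   # m/s
    REACH = 0.3          # m

    def __init__(self, seed=None):
        self.rng = random.Random(seed)
        self.reset()

    def reset(self):
        """Serve a new ball with a random launch velocity and return the first observation."""
        self.ball_x, self.ball_y = 0.0, 1.0
        self.vx = self.rng.uniform(2.0, 4.0)
        self.vy = self.rng.uniform(2.0, 4.0)
        self.paddle_x = 3.0
        return self._obs()

    def _obs(self):
        return (self.ball_x, self.ball_y, self.vx, self.vy, self.paddle_x)

    def step(self, action):
        """Advance one time step; action is -1 (left), 0 (stay) or +1 (right)."""
        self.paddle_x += action * self.PADDLE_SPEED * self.DT
        # Semi-implicit Euler: update velocity under gravity, then position.
        self.vy += self.GRAVITY * self.DT
        self.ball_x += self.vx * self.DT
        self.ball_y += self.vy * self.DT
        if self.ball_y <= 0.0:  # ball has landed: the episode ends
            hit = abs(self.ball_x - self.paddle_x) <= self.REACH
            return StepResult(self._obs(), 1.0 if hit else -1.0, True)
        return StepResult(self._obs(), 0.0, False)


def chase_policy(obs):
    """Hand-written baseline: move the paddle toward the ball's x position."""
    ball_x, _, _, _, paddle_x = obs
    if ball_x > paddle_x + 0.05:
        return 1
    if ball_x < paddle_x - 0.05:
        return -1
    return 0


def run_episode(env, policy, max_steps=500):
    """Roll out one episode and return the total reward and the number of steps taken."""
    obs, total, steps = env.reset(), 0.0, 0
    for steps in range(1, max_steps + 1):
        result = env.step(policy(obs))
        obs, total = result.observation, total + result.reward
        if result.done:
            break
    return total, steps
```

A learning agent would replace `chase_policy` with a policy trained on the rewards returned by `step`; the environment itself stays unchanged, which is what lets such an environment act as a common benchmark for competing agents.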

NeuroMerging — Training-Free Model Merging via Neuronal Subspace

Status: Active. A training-free framework for merging models fine-tuned on different tasks into a single multi-task model. It uses neuronal subspace decomposition to mitigate the performance degradation that merging typically causes, enabling efficient multi-task model composition.
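The NeuroMerging description does not spell out the algorithm, so the following is only a hedged illustration of the general idea. It starts from task arithmetic, a well-known training-free baseline that adds the sum of task vectors (fine-tuned minus pretrained weights) back to the pretrained model. It then splits each neuron's task vector into a component parallel to that neuron's pretrained weight vector and an orthogonal remainder. The rule of keeping only the orthogonal parts, the `lam` scaling factor and all function names are hypothetical choices; NeuroMerging's actual decomposition and merging rule may differ.

```python
def sub(a, b):
    return [x - y for x, y in zip(a, b)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def scale(a, s):
    return [x * s for x in a]


def split_neuron_update(w_pre, delta):
    """Split one neuron's update into components parallel and orthogonal to its pretrained weights."""
    norm_sq = dot(w_pre, w_pre)
    coeff = dot(delta, w_pre) / norm_sq if norm_sq > 0 else 0.0
    parallel = scale(w_pre, coeff)
    return parallel, sub(delta, parallel)


def merge_layer(w_pre, finetuned, lam=1.0, keep_parallel=False):
    """Training-free merge of fine-tuned copies of one linear layer (each row is a neuron).

    keep_parallel=True reduces to plain task arithmetic: w_pre + lam * sum of task vectors.
    keep_parallel=False (hypothetical rule) sums only the orthogonal components."""
    merged = []
    for i, row_pre in enumerate(w_pre):
        total = [0.0] * len(row_pre)
        for w_ft in finetuned:
            delta = sub(w_ft[i], row_pre)  # task vector restricted to neuron i
            _, orthogonal = split_neuron_update(row_pre, delta)
            part = delta if keep_parallel else orthogonal
            total = [t + x for t, x in zip(total, part)]
        merged.append([p + lam * t for p, t in zip(row_pre, total)])
    return merged


# Toy 2-neuron layer and two hypothetical fine-tuned versions of it.
w_pre = [[1.0, 0.0], [0.0, 2.0]]
ft_a = [[1.5, 0.5], [0.0, 2.0]]
ft_b = [[1.0, -0.2], [0.4, 2.4]]
merged = merge_layer(w_pre, [ft_a, ft_b])
baseline = merge_layer(w_pre, [ft_a, ft_b], keep_parallel=True)
```

Here `baseline` is approximately `[[1.5, 0.3], [0.4, 2.4]]`, while `merged` is approximately `[[1.0, 0.3], [0.4, 2.0]]`: the updates that only rescale each neuron along its pretrained direction are dropped, and the orthogonal updates from both tasks are combined.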

Efficient Agentic AI Adaptation

Lightweight adaptation strategies enabling agentic AI systems to generalise rapidly across diverse tasks and environments.

Safety & Security in Agentic AI

Research into alignment, robustness, and adversarial defence for autonomous AI agents operating in high-stakes settings.

Agentic AI in Business & Enterprise

Deploying multi-agent workflows for real-world enterprise automation, decision support, and intelligent process orchestration.